·

9 min read

Write to Fresh Jots from SQL — Postgres, MySQL, SQL Server

Write to Fresh Jots from SQL — Postgres, MySQL, SQL Server

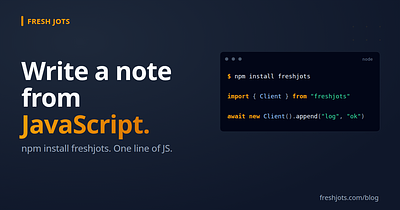

A spin-off from Write a note from any project, focused on databases. Four flavors, in order from "drop-in" to "more setup, more durable":

- **Postgres + `http` extension** — synchronous HTTP from SQL. Cleanest for one-off scripts and admin tasks.

- **Postgres + `pg_net` extension** — *asynchronous* HTTP from SQL. The right answer for triggers.

- **MySQL / SQL Server / anything else** — write to an outbox table from the trigger, drain it with an external worker.

- **Azure SQL** — has `sp_invoke_external_rest_endpoint` natively; no extensions, no outbox.

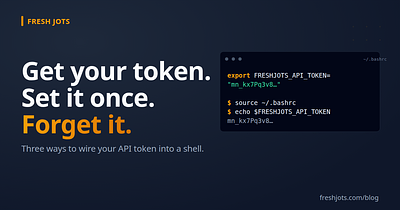

You'll need a Fresh Jots API token. If you don't have one, see Get & set your Fresh Jots API token.

Before you wire HTTP into a transaction — read this

Before you wire HTTP into a transaction — read this

**Don't make synchronous outbound HTTP calls inside a transaction.** It's the same class of bug as sending an email inside a transaction: easy to write, painful in production.

Three failure modes that bite:

1. **The HTTP target is slow.** Your `INSERT` now holds row locks for 5 seconds. Lock-wait timeouts cascade.

2. **The HTTP target is down.** Your transaction stalls. Connection pool fills up. Application starts 5xx-ing.

3. **The transaction rolls back after the HTTP call succeeds.** You've appended a row to your Fresh Jots note that says a thing happened that, as far as the database is concerned, didn't.

Two ways to avoid all three:

- **Use `pg_net`** if you're on Postgres. It's *async by design* — the call returns immediately, the request goes through a worker, and a transaction rollback is moot because the request was queued, not made.

- **Outbox pattern** if you're not. Insert into a queue table inside the transaction (atomic with the rest of the work), drain it with an external poller.

Synchronous HTTP from SQL has exactly one legitimate use: ad-hoc admin scripts you run by hand, where you'd notice and care if the HTTP target was unreachable. For triggers, never.

The rest of this post follows that rule.

Postgres — the `http` extension (synchronous, for admin scripts)

Postgres — the `http` extension (synchronous, for admin scripts)

The `pgsql-http` extension lets you make HTTP calls from SQL. Install it once:

CREATE EXTENSION http;

Then store your token in a database setting so it's not in plain SQL files:

-- Once, as a superuser. The setting persists across sessions. ALTER DATABASE my_app SET app.fjots_token = 'mn_yourrealtokenhere';

(On managed Postgres without superuser access, store it in a tiny config table instead — `SELECT value FROM app_settings WHERE key = 'fjots_token'`.)

Append a line from a `psql` session, a migration script, or a manual one-off:

SELECT status, content::text

FROM http_post(

'https://freshjots.com/api/v1/notes/by-filename/db-events/append',

jsonb_build_object('text', 'manual backup verified at ' || now())::text,

'application/json',

ARRAY[http_header('Authorization',

'Bearer ' || current_setting('app.fjots_token'))]

);`status` will be `200` on success and the body is the updated-note envelope.

Useful when you want to script a one-off ("write a snapshot of `SELECT count(*) FROM users` to a note every Monday from a `psql` cron job"). **Not** what you want inside a trigger.

Postgres — `pg_net` (asynchronous, for triggers)

Postgres — `pg_net` (asynchronous, for triggers)

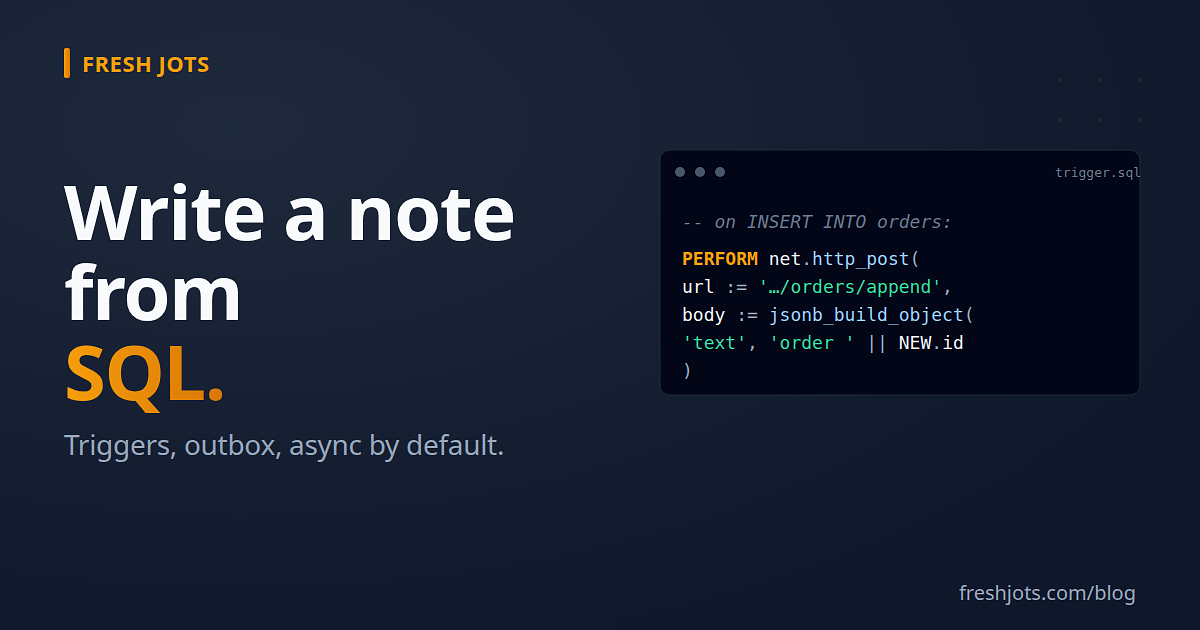

The `pg_net` extension (Supabase's, BSD-licensed) makes HTTP calls *outside* the transaction via a background worker. The trigger inserts a job and returns immediately; the worker performs the actual HTTP call seconds later.

Install:

CREATE EXTENSION pg_net; -- pg_net's worker process needs to be running — on Supabase it's -- managed automatically; on self-hosted Postgres see the pg_net README.

Same `app.fjots_token` setting trick from above applies.

A trigger that logs every new order to a Fresh Jots note:

CREATE OR REPLACE FUNCTION fjots_log_order()

RETURNS TRIGGER AS $$

BEGIN

PERFORM net.http_post(

url := 'https://freshjots.com/api/v1/notes/by-filename/orders/append',

body := jsonb_build_object(

'text', format('order %s — %s @ %s',

NEW.id, NEW.customer_email, NEW.created_at)

),

headers := jsonb_build_object(

'Authorization', 'Bearer ' || current_setting('app.fjots_token'),

'Content-Type', 'application/json'

)

);

RETURN NEW;

END;

$$ LANGUAGE plpgsql;

CREATE TRIGGER fjots_log_order_trg

AFTER INSERT ON orders

FOR EACH ROWEXECUTE FUNCTION fjots_log_order();What this gives you:

- The `INSERT INTO orders ...` returns in the usual ~1 ms. The HTTP call happens in the background.

- A network blip or a Fresh Jots outage doesn't roll back the order — it produces a failed pg_net job you can retry.

- The `orders` Fresh Jots note becomes a real-time stream of every new order. Searchable, exportable, pageable.

Inspecting and retrying failed jobs

Inspecting and retrying failed jobs

-- Recent requests SELECT id, status_code, error_msg, created FROM net._http_response ORDER BY created DESC LIMIT 20; -- Drop completed ones to keep the table small (pg_net has a cleanup -- worker for this, but you can also do it manually): SELECT net.http_collect_response(id) FROM net._http_response WHERE created < now() - interval '7 days';

For everything-or-nothing semantics ("the note write *must* succeed or the order doesn't count"), use the outbox pattern below — pg_net is fire-and-forget; if you need delivery guarantees, you need a queue with explicit retry.

MySQL / SQL Server / generic — the outbox pattern

MySQL / SQL Server / generic — the outbox pattern

MySQL has no native HTTP. SQL Server's `xp_cmdshell + curl` is usually disabled in production, and its CLR HTTP route is complex. The portable answer is the **outbox pattern**: write to a queue table inside the transaction, drain it with a tiny external worker.

The outbox table

The outbox table

-- MySQL syntax. PG/MSSQL: change types as appropriate. CREATE TABLE fjots_outbox ( id BIGINT UNSIGNED NOT NULL AUTO_INCREMENT PRIMARY KEY, filename VARCHAR(255) NOT NULL, body_text TEXT NOT NULL, created_at TIMESTAMP NOT NULL DEFAULT CURRENT_TIMESTAMP, sent_at TIMESTAMP NULL, last_error TEXT NULL, INDEX (sent_at, id) );

The trigger (MySQL example — applies cleanly to others)

The trigger (MySQL example — applies cleanly to others)

DELIMITER $$

CREATE TRIGGER log_orders_to_fjots

AFTER INSERT ON orders

FOR EACH ROW

BEGIN

INSERT INTO fjots_outbox (filename, body_text)

VALUES (

'orders',

CONCAT('order ', NEW.id, ' — ', NEW.customer_email, ' @ ', NEW.created_at) );

END$$

DELIMITER ;The trigger is now **only** writing to a local table. Same transaction, atomic with the order — if the order rolls back, the outbox row goes with it.

The drainer (Python, ~30 lines)

The drainer (Python, ~30 lines)

# fjots_outbox_drainer.py

import os

import time

import freshjots # pip install freshjots

import pymysql # or psycopg / pyodbc / etc.

DB = pymysql.connect(host="db", user="…", password="…", database="my_app")

client = freshjots.Client() # reads FRESHJOTS_TOKEN

def drain_once():

with DB.cursor() as cur:

cur.execute(

"SELECT id, filename, body_text "

"FROM fjots_outbox WHERE sent_at IS NULL "

"ORDER BY id LIMIT 100 FOR UPDATE SKIP LOCKED"

)

rows = cur.fetchall()

for row_id, filename, text in rows:

try:

client.append(filename, text)

cur.execute("UPDATE fjots_outbox SET sent_at = NOW() WHERE id = %s", (row_id,))

except freshjots.ApiError as e:

cur.execute(

"UPDATE fjots_outbox SET last_error = %s WHERE id = %s",

(f"{e.status} {e.code}: {e}", row_id),

)

DB.commit()

while True:

drain_once()

time.sleep(2)`FOR UPDATE SKIP LOCKED` means you can run multiple drainer processes for throughput without them stepping on each other's rows. The drainer's worst-case failure mode is "lag" — rows accumulate, get written when the drainer recovers. The order itself is never lost.

The drainer doesn't need to be in Python. Bash + `curl` + a `WHILE` loop works. Node + a setInterval works. Whatever your sidecar runtime is, the SQL side is identical.

SQL Server — `sp_invoke_external_rest_endpoint` (Azure SQL only)

SQL Server — `sp_invoke_external_rest_endpoint` (Azure SQL only)

Azure SQL Database (not on-prem SQL Server) ships with a native call you can use directly:

DECLARE @response NVARCHAR(MAX);

DECLARE @body NVARCHAR(MAX) = N'{"text":"backup completed"}';

EXEC sp_invoke_external_rest_endpoint

@url = N'https://freshjots.com/api/v1/notes/by-filename/db-events/append',

@method = 'POST',

@headers = N'{"Authorization":"Bearer mn_yourtoken","Content-Type":"application/json"}',

@payload = @body,

@response = @response OUTPUT;

SELECT JSON_VALUE(@response, '$.response.status.http.code') AS http_code;This is synchronous, so the trigger caveats above still apply — use it for admin scripts, not inside triggers. For SQL Server triggers, use the outbox pattern.

Storing the token: SQL Server's `DATABASE SCOPED CREDENTIAL` is the right place. See Microsoft's docs for `sp_invoke_external_rest_endpoint` + credentials.

For on-prem / SQL Server pre-2022: the [outbox pattern](#mysql--sql-server--generic--the-outbox-pattern) is the only sane answer.

Real patterns

Real patterns

Every UPDATE on `users.email` writes a line. The Fresh Jots note becomes a tamper-evident audit trail your DBAs can't quietly edit.

-- Postgres + pg_net

CREATE OR REPLACE FUNCTION fjots_audit_email_change()

RETURNS TRIGGER AS $$

BEGIN

IF OLD.email IS DISTINCT FROM NEW.email THEN

PERFORM net.http_post(

url := 'https://freshjots.com/api/v1/notes/by-filename/email-audit/append',

body := jsonb_build_object(

'text', format('user %s: %s → %s by %s @ %s',

NEW.id, OLD.email, NEW.email,

current_user, now())

),

headers := jsonb_build_object(

'Authorization', 'Bearer ' || current_setting('app.fjots_token'),

'Content-Type', 'application/json'

)

);

END IF;

RETURN NEW;

END;

$$ LANGUAGE plpgsql;

CREATE TRIGGER fjots_audit_email_change_trg

AFTER UPDATE ON users

FOR EACH ROW

EXECUTE FUNCTION fjots_audit_email_change();Pair with a [dead-man-switch alert](/blog/everything-you-can-do-here) on the `email-audit` note: if no email change has happened in 30 days, the note goes silent and Fresh Jots emails you. *Useful*. If a long-quiet `email-audit` note suddenly starts seeing five changes a minute, Fresh Jots's normal real-time view in your browser surfaces it; the absence of activity is the dead-man's-switch signal.

Daily summary from a scheduled job

Daily summary from a scheduled job

A `pg_cron` job that writes a daily snapshot:

SELECT cron.schedule('fjots-daily', '0 9 * * *', $$

SELECT http_post(

'https://freshjots.com/api/v1/notes/by-filename/daily-stats/append',

jsonb_build_object(

'text', format(

'date=%s users=%s orders=%s revenue=%s',

current_date,

(SELECT count(*) FROM users WHERE created_at::date = current_date),

(SELECT count(*) FROM orders WHERE created_at::date = current_date),

(SELECT sum(amount) FROM orders WHERE created_at::date = current_date)

)

)::text,

'application/json',

ARRAY[http_header('Authorization',

'Bearer ' || current_setting('app.fjots_token'))]

);$$);Synchronous `http_post` is fine here because `pg_cron` jobs run outside user transactions. The note becomes a daily log you can search and export at month-end.

Migration journal

Migration journal

`db/migrate/*` scripts append "ran 2026_05_14_add_orders_index" to a `migration-runs` note. Searchable history of every schema change across every environment. Beats reading `schema_migrations` joined with deployment logs.

Going further

Going further

- **Other languages — same pattern, different HTTP client.** See the hub: Write a note from any project.

- **The CLI** — useful for one-off SQL→shell→note workflows. See Notes from your terminal.

- **Dead-man alerts on audit notes** — pair an `INSERT`-driven note with `append_deadline_hours` and Fresh Jots emails you when the writes go silent. See Everything you can do here.

- **`pg_net` source / docs:** github.com/supabase/pg_net.

- **`pgsql-http` source / docs:** github.com/pramsey/pgsql-http.

One trigger, one outbox table, one cron job, your whole database surface logged into searchable notes. Without setting up Kafka, without paying for Datadog, without bolting an APM agent onto a Postgres role.